Starting today, I want to share some of what I know about SoapUI (as promised at the end of the post http://googlielmo.blogspot.ie/2013/12/dance-into-groovy.html). I have used this tool for several years for load testing and integration testing, and I have found it really helpful.

SoapUI is a tool for functional and non-functional testing. Despite its name, it is not limited to the SOAP protocol or to web services: you can run tests against different protocols (HTTP/S, REST, JDBC, AMF, etc.) and application layers. It was created for testing SOAP web services, but it has since evolved into a general-purpose testing tool.

It comes in two editions, Open Source and Pro. The first one is free to use, and you can also modify the source code under the terms of the LGPL license. The second one is commercial and closed source. In this post and the following ones I will refer to the Open Source edition. The companies I have worked for in recent years didn't want to buy Pro licenses, even though they were happy with the benefits they got from the product, so my experience against real systems is based mostly on the Open Source edition. Based on that experience, I can say that this edition covers most of what system testing requires. Furthermore, SoapUI is very flexible and can be extended through scripts written in a language like Groovy.

SoapUI is written in Java, so it is platform independent. The installation is very simple: go to the official website (http://www.soapui.org/) and download the release for your operating system. The only prerequisite for this tool is a Java Runtime Environment. You can choose between installer packages that include a JRE and packages that do not (if you prefer to use the one already installed on your system).
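If you go for a package without a bundled JRE, it can help to check which Java runtime is already installed on your system. The following is only a minimal sketch in plain Java, not something that ships with SoapUI: the class name `JreCheck` and the method `describeRuntime` are hypothetical names chosen for this example, and the code relies only on standard Java system properties.

```java
// Hypothetical helper (not part of SoapUI): reports the Java runtime
// that executes this class, using standard system properties only.
public class JreCheck {

    // Builds a one-line description of the current Java runtime.
    static String describeRuntime() {
        String version = System.getProperty("java.version"); // runtime version string
        String vendor = System.getProperty("java.vendor");   // runtime vendor name
        String home = System.getProperty("java.home");       // installation directory
        return "Java " + version + " (" + vendor + ") at " + home;
    }

    public static void main(String[] args) {
        System.out.println(describeRuntime());
    }
}
```

Compile and run it with the `javac` and `java` commands of the runtime you intend to use for SoapUI; the printed line tells you which installation is actually picked up.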

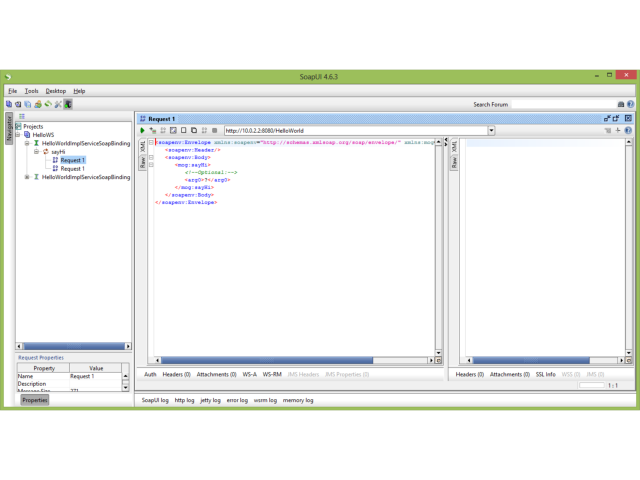

SoapUI comes with a user-friendly interface that makes it easy to quickly create and run automated tests from a single point of access.

SoapUI also provides other features, such as web service mocking, security testing and recording.

Its large Open Source community has accelerated integration with other popular tools, so you can find plugins for the most popular Java IDEs (Eclipse, NetBeans, IntelliJ) and for other helpful automation tools (Jenkins, Maven, Ant, etc.).

In the next post we will see the anatomy of a SoapUI project.

